So, I’m in Unity and I’m trying to create an alpha mask based on a gradient from one object to another. Basically, if anything is behind this object with the gradient texture, I need the black and white value of that gradient to determine the alpha channel in the fragment shader of the object behind it. I’m still a little new to writing shaders, and am trying to figure out how to get the color information from one shader to another. On top of this, I need it to adjust from world space to screen space of the mask AND the masked object, so there will probably need to be some vertex to fragment conversion …that involves both shaders. Any help would be amazing. Thanks!

Are you doing this in a text shader? Or in Shader Graph?

Text. HLSL with Universal Render Pipeline to be exact. But am open to ShaderLab if there is an example. I can always just translate it to code once I get it working.

I’m not 100% sure I get the use case. You want a foreground object that works like a lens or portal, so that objects behind it become transparent?

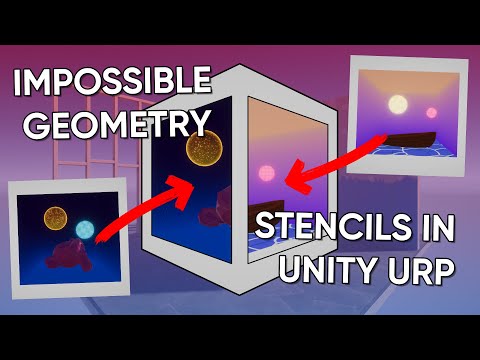

That sounds like a job for a stencil buffer, combined with a render layer that makes sure the stencil is populated before the background object renders:

Or am I missing something?

Can’t be stencil. I need alpha to change based on a black and white gradient. I think I found something in the asset store. Digging through the code now to see if I can adapt it to my needs:

It’s easy enough to control the alpha per object – it’s the inter-object communication that will be tricky.

An alternative in URP would be to add a second camera and have your “lens” object render only into that camera with an unlit shader that outputs your gradient value (you control which objects it sees with the camera’s culling mask). The second camera renders into a render texture. The main rendered object then reads from that render texture to control its own alpha, using screen space coords instead of UVs.
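As a rough illustration of the "read the render texture with screen space coords" part, here is a minimal sketch of the masked object's shader stages. It assumes URP's `Core.hlsl` helpers, and `_MaskTex` is a made-up name for the render texture the second camera writes into. I haven't run this exact code, so treat it as a starting point rather than a drop-in shader.

```hlsl
// Sketch only: belongs inside the HLSLPROGRAM block of a URP pass that also
// declares "#pragma vertex vert" and "#pragma fragment frag".
#include "Packages/com.unity.render-pipelines.universal/ShaderLibrary/Core.hlsl"

// Hypothetical name: the render texture written by the mask camera.
TEXTURE2D(_MaskTex);
SAMPLER(sampler_MaskTex);

struct Attributes
{
    float4 positionOS : POSITION;
};

struct Varyings
{
    float4 positionCS : SV_POSITION;
    float4 screenPos  : TEXCOORD0; // homogeneous screen position
};

Varyings vert(Attributes input)
{
    Varyings output;
    VertexPositionInputs pos = GetVertexPositionInputs(input.positionOS.xyz);
    output.positionCS = pos.positionCS;
    output.screenPos  = pos.positionNDC; // divided by w in the fragment stage
    return output;
}

half4 frag(Varyings input) : SV_Target
{
    // Perspective divide gives 0..1 coordinates across the screen.
    float2 screenUV = input.screenPos.xy / input.screenPos.w;

    // The red channel of the mask stands in for the black-and-white gradient.
    half mask = SAMPLE_TEXTURE2D(_MaskTex, sampler_MaskTex, screenUV).r;

    // Placeholder white base color; a real shader would use its own color here.
    return half4(1.0, 1.0, 1.0, mask);
}
```

For the alpha to have any visible effect, the pass also needs blending turned on in ShaderLab (for example `Blend SrcAlpha OneMinusSrcAlpha`) and the object should sit in a transparent queue.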

Interesting. I assume I’d still need to alter any masked shader I want to adjust though to give access to the alpha masking information.

You’d write the masked shader so it takes a texture input, and then pass the render texture to the material as that texture. Since you can make the render texture an asset on disk, you can set it as the default texture. At least if you do that in ShaderGraph shaders you can set it up so there’s no need to manually override. I think the same is possible in text shaders but I don’t recall the syntax offhand.

Hmm. I’ll have to dig more into this. I need it to work with particles, UI, 3d, and sprites.

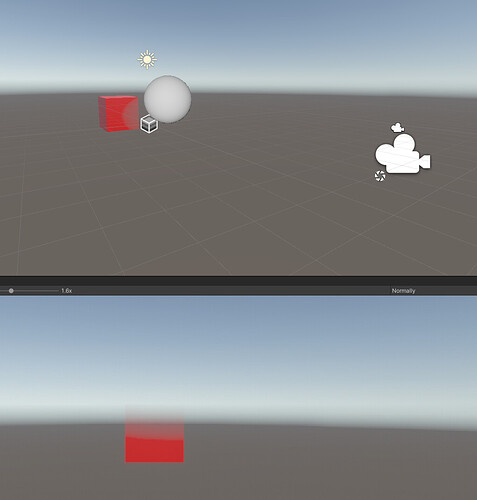

Hey @Theodox could I see more of your setup in the hierarchy? Still learning about how to use a second camera and how you’re passing the information to the main camera after applying the mask. This might be the best way to do this if I understand it correctly. Would prevent me from having to make new shaders for all of my objects. Thanks!

In this case I just had two vanilla cameras, one parented to the other and using the same FOV settings. It’s not exactly like real “camera stacking”: in URP, cams that render to separate render textures will render before cams that render to the frame buffer, so no extra work is needed to make sure the render tex is available during the “real” render pass. So it’s really just two cameras, and if you parent the second under the first and zero its transform you can be sure they are seeing the same things. If you needed to change FOV on the fly you’d have to make sure you do it to both cameras at once, but that’s uncommon.

In text shaders, you can add the reference to the texture without a corresponding entry in the Properties block and use Shader.SetGlobalTexture to assign the correct render target from code. That’ll reach all shaders that declare that texture. So your shader would just include a line like:

Texture2D _MyPeekabooTexture;

and then your code would call something like:

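// AssetDatabase lives in UnityEditor, so this lookup only works in the editor.
RenderTexture peekaboo = AssetDatabase.LoadAssetAtPath<RenderTexture>("path/here");

// Bind the render texture globally under the name the shaders declare.
Shader.SetGlobalTexture("_MyPeekabooTexture", peekaboo);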

which should set the correct reference for all shaders that have a _MyPeekabooTexture in them. Haven’t had a chance to test that exact sequence, but it should be something along those lines.
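On the shader side, one way to reuse this across several shaders (particles, sprites, UI and so on) might be a small shared include. The sketch below is untested and makes a few assumptions: it uses URP's `TEXTURE2D`/`SAMPLER` macros instead of a raw `Texture2D` declaration, it relies on `GetNormalizedScreenSpaceUV` being available in your URP version, and `ApplyPeekabooMask` is a hypothetical helper name, not anything built in.

```hlsl
// Sketch of a shared include, e.g. a hypothetical PeekabooMask.hlsl.
#include "Packages/com.unity.render-pipelines.universal/ShaderLibrary/Core.hlsl"

// Declared here but deliberately NOT listed in the ShaderLab Properties block,
// so the value comes from Shader.SetGlobalTexture rather than the material.
TEXTURE2D(_MyPeekabooTexture);
SAMPLER(sampler_MyPeekabooTexture);

// Hypothetical helper: multiplies a color's alpha by the mask gradient.
// positionCS is the fragment's SV_POSITION input (pixel coordinates).
half4 ApplyPeekabooMask(half4 color, float4 positionCS)
{
    // Convert pixel coordinates to 0..1 screen UVs.
    float2 screenUV = GetNormalizedScreenSpaceUV(positionCS);

    // Red channel carries the black-and-white gradient value.
    half mask = SAMPLE_TEXTURE2D(_MyPeekabooTexture, sampler_MyPeekabooTexture, screenUV).r;

    color.a *= mask;
    return color;
}
```

Each masked shader would then end its fragment function with something like `return ApplyPeekabooMask(color, input.positionCS);`. Keeping the texture out of the Properties block matters because a per-material value for the same name would take precedence over the global one.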