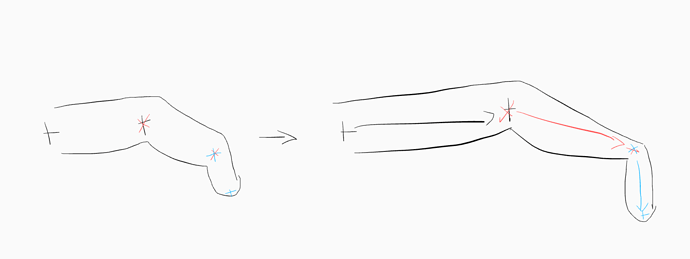

I’m trying to help somebody rig a hand mesh for use in XR applications. The input data come from hand tracking as oriented points, and the point positions reflect the size of the wearer’s hand. This means the hand skeleton’s joints will translate to accommodate different hand sizes, but the bones won’t scale. For some bones this creates odd effects, because there’s no easy way to smooth out the junction of two bones that have been moved relative to each other but not scaled. The artist painting the weights is kinda confused.
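To make the mismatch concrete, here is a minimal Python sketch. It is not taken from any particular engine or hand-tracking SDK. It assumes each finger is a simple chain of joint positions in the same units and frame for both the bind pose and the tracked pose. The functions `bone_lengths` and `stretch_ratios`, along with the sample coordinates, are hypothetical. The script compares each bone’s tracked length with its rest-pose length. Any ratio other than 1.0 is the length change that a translate-only rig leaves for the skin weights around that joint to absorb.

```python
import math

# A 3D point (x, y, z); units only need to be consistent between poses.
Vec3 = tuple[float, float, float]


def bone_lengths(joints: list[Vec3]) -> list[float]:
    """Distance between each consecutive pair of joints in a chain."""
    return [math.dist(a, b) for a, b in zip(joints, joints[1:])]


def stretch_ratios(rest: list[Vec3], tracked: list[Vec3]) -> list[float]:
    """Per-bone ratio tracked_length / rest_length for one joint chain.

    With a translate-only rig, the child joint moves to the tracked
    position, but vertices weighted to the parent bone keep their
    rest-pose spacing. A ratio != 1.0 is therefore the stretch (or
    compression) that the weight blend around the joint has to hide.
    """
    if len(rest) != len(tracked):
        raise ValueError("rest and tracked chains must have the same joints")
    return [t / r for r, t in zip(bone_lengths(rest), bone_lengths(tracked))]


if __name__ == "__main__":
    # Hypothetical index-finger chain (metres): knuckle -> tip.
    rest = [(0.0, 0.0, 0.0), (0.0, 0.04, 0.0), (0.0, 0.065, 0.0), (0.0, 0.085, 0.0)]
    # Same chain as reported by tracking for a hand roughly 10% larger.
    tracked = [(0.0, 0.0, 0.0), (0.0, 0.044, 0.0), (0.0, 0.0715, 0.0), (0.0, 0.0935, 0.0)]
    for i, ratio in enumerate(stretch_ratios(rest, tracked)):
        print(f"bone {i}: tracked/rest length = {ratio:.3f}")
```

With these made-up numbers every bone comes out at about 1.1. If the bones were allowed to scale, that is roughly the factor each one would need. Because the rig only translates, each bone has a 10% length difference that shows up as a visible seam at the joints.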

Anyone have some quick pointers I could pass along?